

Integral World: Exploring Theories of Everything

An independent forum for a critical discussion of the integral philosophy of Ken Wilber

Frank Visser, graduated as a psychologist of culture and religion, founded IntegralWorld in 1997. He worked as production manager for various publishing houses and as service manager for various internet companies and lives in Amsterdam. Books: Ken Wilber: Thought as Passion (SUNY, 2003), and The Corona Conspiracy: Combatting Disinformation about the Coronavirus (Kindle, 2020).

Are All LLMs Offshoots of OpenAI?

A Comparative Analysis of Today's Leading Models and Their Trajectories

Frank Visser / ChatGPT

Introduction: A Common Misconception

It is tempting to assume that all large language models (LLMs) are somehow derived from OpenAI, given the visibility of ChatGPT and the early public impact of GPT-3. However, this assumption does not withstand scrutiny. While OpenAI played a catalytic role in popularizing LLMs, the field is better understood as a competitive and rapidly diversifying ecosystem built on shared scientific foundations rather than on direct lineage. At the core of nearly all modern LLMs lies the Transformer architecture, introduced in 2017 by researchers at Google. That architecture, not OpenAI itself, is the true common ancestor.

The Major Families of LLMs

OpenAI: Iterative Scaling and Product Integration

OpenAI's GPT series (e.g., GPT-4 and later iterations) emphasizes:
• Strong general reasoning performance
• Tight integration with consumer-facing tools (ChatGPT, APIs)
• Reinforcement learning from human feedback (RLHF) for alignment

Functionally, OpenAI models tend to excel at structured reasoning, coding, and multi-domain synthesis. Their ecosystem strategy prioritizes usability and broad deployment.

Google DeepMind: Multimodality and Knowledge Integration

Models such as Gemini (the model family behind the chatbot formerly called Bard) represent Google DeepMind's approach:
• Deep integration with search and real-time data
• Native multimodality (text, images, video, code)
• Emphasis on factual grounding and tool use

Compared to OpenAI, Google's models are more tightly coupled to the company's information infrastructure, aiming to merge LLMs with search engines rather than replace them.

Anthropic: Alignment and Constitutional AI

Anthropic produces the Claude family, which differs in its philosophical and technical orientation:
• Focus on safety and interpretability
• Use of “Constitutional AI”, which guides training with a written set of principles and relies less on human-labeled feedback than conventional RLHF
• Longer context windows (handling large documents)

Functionally, Claude models often excel in careful reasoning, document analysis, and maintaining coherent long-form discourse.
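Both alignment approaches named above, as publicly described, include a step in which a reward (or preference) model learns from pairwise comparisons between two candidate responses; they differ mainly in who supplies many of those comparison labels (human raters in RLHF, an AI model guided by written principles in Constitutional AI). The sketch below fits a toy reward model with the standard pairwise, Bradley-Terry style loss, -log σ(r_chosen - r_rejected). It is a minimal illustration under loud assumptions: the linear two-feature model, the feature names "helpfulness" and "harmfulness", the helper function names and the hand-made comparison pairs are all hypothetical stand-ins. Real reward models are large neural networks that score full text, and the later reinforcement-learning step that tunes the LLM against the reward model is not shown.

```python
import math
from typing import List, Sequence, Tuple

# (features of the preferred response, features of the rejected response)
Pair = Tuple[List[float], List[float]]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def pairwise_loss(margin: float) -> float:
    """-log(sigmoid(margin)) with margin = r_chosen - r_rejected.

    Written so that large |margin| values do not overflow math.exp."""
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def reward(w: Sequence[float], x: Sequence[float]) -> float:
    """Hypothetical linear reward model: a weighted sum of hand-made features."""
    return sum(wi * xi for wi, xi in zip(w, x))


def train_reward_model(pairs: List[Pair], dim: int,
                       lr: float = 0.5, epochs: int = 200) -> List[float]:
    """Fit weights so that each preferred response outscores its partner."""
    w = [0.0] * dim
    for _ in range(epochs):
        for chosen, rejected in pairs:  # plain SGD, one comparison at a time
            margin = reward(w, chosen) - reward(w, rejected)
            # dLoss/dmargin = -sigmoid(-margin); dmargin/dw = chosen - rejected
            grad_scale = -sigmoid(-margin)
            for i in range(dim):
                w[i] -= lr * grad_scale * (chosen[i] - rejected[i])
    return w


def mean_loss(w: Sequence[float], pairs: List[Pair]) -> float:
    return sum(pairwise_loss(reward(w, c) - reward(w, r)) for c, r in pairs) / len(pairs)


# Toy comparisons over two hypothetical features: [helpfulness, harmfulness].
# The first response in each pair is the one the labeler preferred.
pairs: List[Pair] = [
    ([0.9, 0.1], [0.4, 0.1]),  # more helpful wins
    ([0.5, 0.0], [0.6, 0.8]),  # far less harmful wins, though slightly less helpful
    ([0.7, 0.2], [0.7, 0.9]),  # equally helpful, less harmful wins
]

w = train_reward_model(pairs, dim=2)
print("learned weights:", w)
print("mean loss before:", mean_loss([0.0, 0.0], pairs), "after:", mean_loss(w, pairs))
```

With these toy pairs the learned weights come out positive for the helpfulness feature and negative for the harmfulness feature, which is the sense in which comparison labels, whoever provides them, end up shaping what the model treats as a good answer.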
Meta: Open-Weight Ecosystem and Research Accessibility

Meta's LLaMA models are significant for a different reason:
• Open-weight (or partially open) distribution
• Encouragement of academic and independent experimentation
• Rapid adaptation by the open-source community

Unlike proprietary systems, LLaMA-derived models have spawned countless fine-tuned variants, making Meta a central player in democratizing LLM technology.

Mistral and Emerging European Players

Companies like Mistral AI (with models such as Mistral and Mixtral) represent a European push:
• Efficient architectures (smaller, faster models)
• Competitive performance with fewer parameters, or, in Mixtral's sparse mixture-of-experts design, fewer parameters active per token
• Open or semi-open distribution strategies

Their functional edge lies in efficiency: delivering strong performance at lower computational cost.

Other Notable Systems
• Cohere: enterprise-focused language models optimized for business workflows
• xAI with Grok: emphasizes real-time information and integration with social platforms
• Alibaba and Baidu: developing regionally dominant LLMs tailored to Chinese language and regulatory contexts

Functional Differences: What Actually Sets Them Apart?

Despite architectural similarities, current LLMs differ along several technical and operational axes.

1. Training Data and Curation
Different datasets lead to distinct “world models.” Some prioritize web-scale breadth, others curated or domain-specific corpora.

2. Alignment Methods
• OpenAI: RLHF
• Anthropic: Constitutional AI (AI feedback guided by written principles)
• Others: hybrid or emerging techniques
These choices affect tone, safety boundaries, and interpretability.

3. Context Window Size
Some models (notably Claude) can process hundreds of pages at once, while others are optimized for shorter interactions.

4. Tool Use and Integration
Google models integrate deeply with search; OpenAI emphasizes API extensibility; enterprise models integrate with proprietary data systems.

5. Multimodality
Handling of images, audio, and video varies widely. Gemini leads in native multimodal design, while others have added it incrementally.

6. Openness vs. Proprietary Control
Meta and Mistral lean toward openness; OpenAI and Anthropic maintain tighter control. This shapes innovation patterns across the ecosystem.

Are They “Offshoots”? A More Accurate Analogy

Rather than a family tree with OpenAI at the root, the LLM landscape resembles convergent evolution. Multiple organizations independently build on:
• The Transformer architecture
• Advances in GPU/TPU computing
• Large-scale training techniques

In that sense, OpenAI is a prominent lineage, not the progenitor of all others.

The Future of LLMs

Several trends are already visible and likely to intensify.

1. From Language Models to General Agents
LLMs are evolving into systems that can plan, act, and use tools autonomously, blurring the line between chatbot and software agent.

2. Increasing Multimodality
Future models will seamlessly integrate text, vision, audio, and possibly real-world sensor data.

3. Efficiency over Scale
The era of “bigger is always better” is giving way to optimization. Smaller, specialized models will rival larger ones in many tasks.

4. Personalization and On-Device AI
Models will increasingly run locally, adapting to individual users while preserving privacy.

5. Fragmentation of the Ecosystem
Rather than one dominant model, we are likely to see a pluralistic landscape:
• Enterprise models
• Open-source ecosystems
• Region-specific AI systems

6. Alignment as a Central Problem
Ensuring that LLMs behave reliably, truthfully, and safely will remain the defining technical and philosophical challenge.

Conclusion: A Competitive Ecology, Not a Monoculture

LLMs are not offshoots of OpenAI but products of a shared paradigm that multiple actors have developed in parallel. What differentiates them today is less their underlying architecture and more their training philosophy, integration strategy, and intended use cases.
The future will not belong to a single model or company. Instead, it will resemble a complex ecology: diverse, specialized, and shaped as much by social and economic forces as by technical innovation.

A Deeper Dive

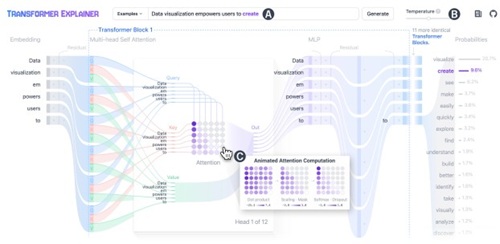

TRANSFORMER EXPLAINER: Interactive Learning of Text-Generative Models (PDF)
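Because the article identifies the 2017 Transformer as the common ancestor of all these model families, a small sketch of its central operation may be a useful companion to the Transformer Explainer. The Python below computes scaled dot-product attention, softmax(QKᵀ/√d_k)V, with the causal mask used by decoder-only text generators such as the GPT series. It is a minimal, dependency-free illustration under stated assumptions: the helper names and the toy input vectors are hypothetical, there is a single attention head, and Q, K and V are taken directly from the inputs rather than from the learned projections a real model uses.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Softmax with the row maximum subtracted first for numerical stability."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def causal_self_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """softmax(Q K^T / sqrt(d_k)) V with a causal mask: position i may only
    attend to positions 0..i, as in GPT-style text generation."""
    d_k = len(q[0])
    scores = matmul(q, transpose(k))
    weights: Matrix = []
    for i, row in enumerate(scores):
        # Dropping future positions from the softmax is equivalent to setting
        # their scores to -infinity, so they receive weight zero.
        visible = softmax([s / math.sqrt(d_k) for s in row[: i + 1]])
        weights.append(visible + [0.0] * (len(row) - i - 1))
    return matmul(weights, v)


# Three toy "token" vectors; in a real model Q, K and V would come from
# learned linear projections of token embeddings (not shown here).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_self_attention(x, x, x)
print(out[0])  # → [1.0, 0.0]: the first token can only attend to itself
```

The causal mask is what lets such a model generate text one token at a time: changing a later token never alters the outputs computed for earlier positions.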

